Key Messages

- Physical and digital documents are used everywhere, and they play a key role in connecting many business processes.

- Most unstructured information is locked in documents.

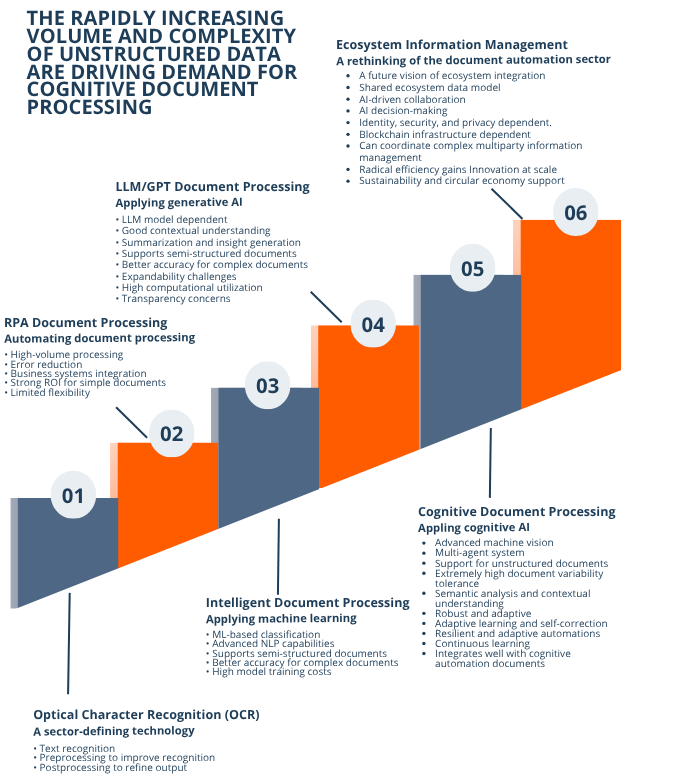

- Document processing technology can be traced through six generations, from optical character recognition (OCR) to the emerging ecosystem information management (EIM).

- The latest generation in use today, cognitive document processing (CDP), uses cognitive AI to understand context and the relationships between data elements. This lets it extract information from documents and handle variability with zero-shot setup, that is, without model training.

- Adopting advanced document processing improves business outcomes including adaptability, transparency, auditability, and lower maintenance costs.

Introduction

Documents are fundamental to almost all business and governmental operations. They are used to encapsulate information, convey instructions, and record transactions. Document processing involves extracting their content, understanding their meaning and significance, and transferring their information to business systems.

Documents can be physical or digital, and they exist in enormous numbers. The global trade sector alone creates approximately 4 billion paper documents a day, and there are estimated to be more than 2.5 trillion PDF documents in the world.

An estimated 181 zettabytes of new data is forecast to be generated in 2025, and 80 to 90 percent of it will be unstructured. Given the massive volume of documents and their critical importance to business processes, it is not surprising that businesses and governments have invested heavily in digitising and automating document handling and processing.

The Evolution of Document Processing

Until the late 1980s, documents were processed entirely by people. Humans are highly adept at interpreting documents, improving with experience and requiring little formal training to adapt to new document types. They are flexible and capable of handling significant variability. However, people are also expensive resources, and their output, both in quality and throughput, can vary widely. Moreover, the volume of documents eventually exceeded the capacity of manual workforces to keep pace. With the rise of computers and the internet, organisations turned to technology to digitise documents and manage them at scale more efficiently and affordably.

Over the past thirty years, document processing has advanced through several waves of innovation. These include breakthroughs in optical character recognition, the introduction of machine learning for more accurate classification, and the integration of natural language processing to enhance data extraction. Each generation has built on the strengths of the last, evolving document processing from keyboard-driven digital transcription into a fully automated and integrated component of complex business processes.

Today, document processing is a core enabler of digital transformation. It accelerates the handling of critical business information, reduces costs, increases automation, and enhances decision-making. Organisations now recognise that documents contain vital business intelligence, whether they are paper or electronic and whether they are structured, semi-structured, or unstructured. When captured, organised, and understood, this information becomes a powerful business asset.

This paper examines the evolution of the five generations of document processing technology to date: OCR, robotic process automation (RPA) document processing, intelligent document processing (IDP), GPT/large language model (LLM) document processing, and cognitive document processing (CDP). It also looks ahead to the potential emergence of a sixth generation, ecosystem information management (EIM).

Generation 1: OCR

Optical Character Recognition detects characters in document images by analysing their shapes, converting them into machine-readable text that can be used in digital systems.

Preprocessing

After a document is captured, preprocessing techniques such as noise removal, de-skewing, and binarisation are applied to enhance clarity and improve recognition accuracy.
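To make binarisation concrete, here is a minimal Python sketch that applies Otsu's method, a common global-thresholding technique chosen here for illustration (not necessarily what any given OCR engine uses), to a tiny greyscale image held as nested lists of 0-255 values. The function names are hypothetical; production pipelines would normally use an imaging library and often adaptive thresholds for unevenly lit scans.

```python
def otsu_threshold(pixels: list[list[int]]) -> int:
    """Return the grey level (0-255) that maximises between-class variance."""
    hist = [0] * 256
    for row in pixels:
        for p in row:
            hist[p] += 1
    total = sum(hist)
    sum_all = sum(level * count for level, count in enumerate(hist))

    sum_dark, weight_dark = 0, 0
    best_t, best_var = 0, -1.0
    for t in range(256):
        weight_dark += hist[t]          # pixels at or below level t
        sum_dark += t * hist[t]
        if weight_dark == 0:
            continue
        weight_light = total - weight_dark
        if weight_light == 0:
            break
        mean_dark = sum_dark / weight_dark
        mean_light = (sum_all - sum_dark) / weight_light
        between_var = weight_dark * weight_light * (mean_dark - mean_light) ** 2
        if between_var > best_var:
            best_var, best_t = between_var, t
    return best_t


def binarise(pixels: list[list[int]]) -> list[list[int]]:
    """Map dark pixels (likely ink) to 0 and light pixels (paper) to 255."""
    t = otsu_threshold(pixels)
    return [[0 if p <= t else 255 for p in row] for row in pixels]


scan = [[10, 12, 200], [11, 210, 205]]  # toy 2x3 greyscale "scan"
print(otsu_threshold(scan))  # 12
print(binarise(scan))        # [[0, 0, 255], [0, 255, 255]]
```

A global threshold like this works well on clean scans; it is the simplest form of the clarity enhancement the preprocessing step describes.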

Postprocessing

Once characters are recognised, postprocessing methods like spell-checking, dictionary-based corrections, and natural language processing are used to refine the output and correct errors.
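The dictionary-based correction step can be sketched with Python's standard-library `difflib`, which scores string similarity. The lexicon and the 0.75 cutoff below are hypothetical, and the sketch covers only dictionary lookup, not the spell-checking or NLP refinements the text also mentions.

```python
from difflib import get_close_matches

# Hypothetical domain lexicon; a real system would load a much larger one.
LEXICON = ("invoice", "total", "amount", "due", "date", "vendor")


def correct_token(token: str, lexicon: tuple[str, ...] = LEXICON, cutoff: float = 0.75) -> str:
    """Replace an alphabetic token with its closest lexicon entry, if close enough."""
    if not token.isalpha():
        return token                  # leave numbers, codes, and punctuation alone
    if token.lower() in lexicon:
        return token                  # already a known word
    matches = get_close_matches(token.lower(), lexicon, n=1, cutoff=cutoff)
    return matches[0] if matches else token


def correct_text(text: str) -> str:
    return " ".join(correct_token(t) for t in text.split())


print(correct_text("lnvoice total 1,250.00"))  # invoice total 1,250.00
```

Skipping non-alphabetic tokens is a deliberate choice: "correcting" amounts or reference numbers against a word list would introduce errors rather than remove them.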

Strengths

Traditional OCR performs well on clean, printed text in standard fonts. Although it struggles with noisy or unstructured documents, OCR still marked a major leap in efficiency, enabling faster document processing, improved searchability, and significant cost reduction compared to manual methods.

Limitations

OCR is far less effective for semi-structured, unstructured, or handwritten text. As a result, its standalone utility is limited in many real-world document processing scenarios without the support of complementary technologies.

Key Characteristics of OCR

- Text recognition: Converts printed or handwritten text into machine-readable formats.

- Image preprocessing: Enhances input quality through techniques such as noise reduction, de-skewing, and binarisation.

- Pattern and feature recognition: Identifies characters and symbols based on their shapes and structures.

- Language and font versatility: Supports multiple languages, character sets, and font styles.

- Flexible output formats: Delivers results in formats such as plain text, searchable PDFs, or structured data.

- High scalability: Processes large document volumes efficiently across industries.

- Pre- and postprocessing support: Incorporates spell-checking, dictionary corrections, NLP, and other refinements to improve accuracy.

Example Use Cases for OCR

Document Digitisation for Archiving: An insurance company uses OCR to convert physical documents such as manuals, contracts, and records into digital formats. This reduces reliance on physical storage while making critical information more searchable and accessible across the organisation.

Automated Data Entry from Forms: A shipping company applies OCR to extract text automatically from structured forms including invoices, receipts, and applications. This automation eliminates manual data entry, saving time, reducing errors, and improving operational efficiency.

Generation 2: Robotic Process Automation Document Processing

The digitisation of documents is rarely a standalone process; it is usually a critical step in broader business workflows. Robotic process automation (RPA) offered an efficient way to streamline these workflows, and as a result, OCR and RPA have become closely linked and are often packaged together.

Repetitive Task Automation

RPA document processing excels at automating repetitive tasks such as invoice processing and patient data management. It uses OCR to read documents and rule-based logic to interpret, transform, and classify their content, while automations or bots load the extracted data into databases and enterprise resource planning (ERP) systems for use in downstream processes. Because it relies on predefined rules and templates, RPA is well suited to handling large volumes of structured documents in predictable formats.
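A minimal sketch of template-based extraction shows both why it works on predictable formats and why it breaks when labels change. The field names, labels, and patterns below are hypothetical; real RPA platforms configure templates in their own tooling rather than raw regular expressions.

```python
import re
from typing import Optional

# Hypothetical fixed template: each field is found by an exact label on its own line.
INVOICE_TEMPLATE = {
    "invoice_number": re.compile(r"^Invoice No:\s*(\S+)$", re.MULTILINE),
    "invoice_date": re.compile(r"^Date:\s*(\d{4}-\d{2}-\d{2})$", re.MULTILINE),
    "total": re.compile(r"^Total Due:\s*([\d,]+\.\d{2})$", re.MULTILINE),
}


def extract_with_template(text: str) -> dict[str, Optional[str]]:
    """Apply each rule in turn; a field is None when its rule does not match."""
    results = {}
    for field, pattern in INVOICE_TEMPLATE.items():
        match = pattern.search(text)
        results[field] = match.group(1) if match else None
    return results


expected = "Invoice No: INV-1042\nDate: 2024-03-01\nTotal Due: 1,250.00"
relabelled = "Invoice #: INV-1042\nIssued: 2024-03-01\nAmount Payable: 1,250.00"

print(extract_with_template(expected))
# {'invoice_number': 'INV-1042', 'invoice_date': '2024-03-01', 'total': '1,250.00'}
print(extract_with_template(relabelled))
# {'invoice_number': None, 'invoice_date': None, 'total': None}
```

The second document carries identical information, but every rule fails because only the labels changed. This is the maintenance burden described under Limitations.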

Strengths

RPA document processing is highly effective at digitising simple, structured documents. It enables organisations to process documents at scale with low costs and high quality, reduces human error, and allows staff to be redeployed to higher-value tasks.

Limitations

RPA document processing is restricted to simple, structured, and non-variable documents that conform to fixed formats. In practice, most organisations also deal with large volumes of complex, unstructured, or variable documents that RPA cannot handle effectively. Because RPA depends on static rules and templates, it lacks adaptability and requires constant maintenance when document formats change.

Key Characteristics of RPA Document Processing

- Extends OCR capabilities: Builds on OCR to automate data extraction and entry.

- Optimised for structured documents: Well-suited for high-volume, predictable formats such as invoices or forms.

- Strong ROI for simple automations: Delivers cost savings and efficiency in repetitive, rules-based tasks.

- Error reduction: Minimises human transcription errors by automating data handling.

- Broad system integration: Connects with a wide range of enterprise platforms and applications.

- Limited flexibility: Struggles with variability, semi-structured, or unstructured documents.

- Lacks contextual understanding: Cannot interpret meaning or relationships beyond predefined rules.

Example Use Cases for RPA Document Processing

Invoice Processing: A finance department uses RPA document processing to extract invoice data, vendor details, and payment terms. An RPA bot then transfers the extracted data into the organisation's accounting system for approval, reducing manual effort, improving efficiency, and accelerating payment cycles.

Forms Processing: A healthcare provider applies RPA document processing to scan and extract patient information from structured admission forms. An RPA bot transfers the data into the patient management system, reducing administrative errors and shortening wait times.

Generation 3: Intelligent Document Processing

Intelligent Document Processing (IDP) extends RPA document processing with machine learning technologies such as deep learning, neural networks, machine vision, and natural language processing. These advancements revolutionised how digital text, handwriting, and image-based data are handled, enabling IDP systems to classify documents and extract key information from both structured and semi-structured formats.

Classification

By leveraging machine learning's ability to recognise patterns, IDP provides far more advanced classification capabilities than earlier generations. Information can be routed automatically with minimal human input. For example, IDP can categorise incoming documents such as invoices or contracts and forward them directly to the appropriate department, reducing manual intervention and making it easier to integrate document content into complex business processes.
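IDP classifiers are typically deep-learning models trained on large labelled sets. As a deliberately small stand-in, the sketch below trains a multinomial naive Bayes classifier (a classical technique swapped in for readability) on four toy documents, then routes a new document to a department only when the predicted class clears a confidence threshold, sending it to human review otherwise. The training texts, routing table, and 0.9 threshold are hypothetical.

```python
import math
from collections import Counter, defaultdict


class NaiveBayesClassifier:
    """Multinomial naive Bayes with Laplace (add-one) smoothing."""

    def fit(self, docs: list[str], labels: list[str]) -> "NaiveBayesClassifier":
        self.class_counts = Counter(labels)
        self.word_counts = defaultdict(Counter)
        for doc, label in zip(docs, labels):
            self.word_counts[label].update(doc.lower().split())
        self.vocab = {w for counts in self.word_counts.values() for w in counts}
        return self

    def predict_proba(self, doc: str) -> dict[str, float]:
        n_total = sum(self.class_counts.values())
        log_scores = {}
        for label, n_docs in self.class_counts.items():
            counts = self.word_counts[label]
            denom = sum(counts.values()) + len(self.vocab)
            score = math.log(n_docs / n_total)          # log prior
            for word in doc.lower().split():
                if word in self.vocab:                  # skip words never seen in training
                    score += math.log((counts[word] + 1) / denom)
            log_scores[label] = score
        top = max(log_scores.values())                  # log-sum-exp normalisation
        exps = {k: math.exp(v - top) for k, v in log_scores.items()}
        z = sum(exps.values())
        return {k: v / z for k, v in exps.items()}


ROUTES = {"invoice": "accounts_payable", "contract": "legal"}  # hypothetical routing table


def route(clf: NaiveBayesClassifier, doc: str, threshold: float = 0.9) -> str:
    probs = clf.predict_proba(doc)
    label = max(probs, key=probs.get)
    return ROUTES[label] if probs[label] >= threshold else "human_review"


clf = NaiveBayesClassifier().fit(
    [
        "invoice number total due payment terms",
        "invoice amount due vendor remittance",
        "contract agreement parties obligations termination",
        "contract clause governing law agreement",
    ],
    ["invoice", "invoice", "contract", "contract"],
)
print(route(clf, "please pay the amount due on this invoice"))  # accounts_payable
print(route(clf, "termination clause of the agreement"))        # legal
print(route(clf, "payment terms agreement"))                     # human_review
```

The confidence threshold is what keeps human input "minimal" rather than zero: ambiguous documents fall back to review instead of being misrouted.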

Data Extraction and Interpretation

Beyond classification, IDP excels at extracting and interpreting document content. With the support of machine learning and NLP, it can analyse and accurately capture key data elements such as names, dates, places, and amounts, providing richer insights for downstream systems.

Limitations

Despite its strengths, IDP depends heavily on training with large volumes of labelled, domain-specific documents: approximately 2,000 samples for semi-structured documents and 10,000 for unstructured ones. This makes IDP expensive to set up and maintain. Its accuracy is strongly tied to the quality and scope of the training set, and performance declines when documents fall outside expected norms. Many vendors also publish misleading accuracy figures that only reflect results within a narrow set of document types.

IDP introduces significant new capabilities but struggles with highly variable or unstructured documents, which limits its effectiveness in complex environments. The integration of machine learning and advanced NLP has raised accuracy to as high as 95 percent, but only for the subset of documents the system has been trained on. In many organisations, these documents represent only about 25 percent of the total incoming volume, leaving roughly 75 percent still requiring human-in-the-loop intervention.
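Read together, the coverage and accuracy figures bound how much incoming volume IDP can finish correctly without people. Assuming, for illustration, that the 95 percent accuracy applies per document and only within the 25 percent of volume the system was trained on:

```latex
\begin{aligned}
\text{volume outside trained coverage} &= 1 - 0.25 = 0.75 \\
\text{errors within trained coverage} &= 0.25 \times (1 - 0.95) = 0.0125 \\
\text{upper bound on correct automated output} &= 0.25 \times 0.95 = 0.2375
\end{aligned}
```

On these assumptions, no more than about 24 percent of total volume is processed both automatically and correctly, which is why a headline accuracy figure on a trained subset can overstate business impact.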

Key Characteristics of Intelligent Document Processing

- Machine learning-driven classification and extraction: Identifies document types and extracts key fields with greater intelligence than rule-based systems.

- Enhanced OCR accuracy: Provides more reliable text recognition compared to traditional OCR.

- Advanced NLP capabilities: Interprets context, entities, and relationships within documents.

- Support for semi-structured documents: Handles formats such as invoices, purchase orders, and forms with variable layouts.

- Reduced human intervention: Requires human-in-the-loop validation for fewer documents.

- High development and maintenance costs: Model training and upkeep demand significant time, data, and resources.

- Limited scalability for complex processes: Struggles to extend beyond semi-structured tasks or highly variable documents.

Example Use Cases for IDP

Insurance Claim Processing: An insurance company uses IDP to process claim forms, classifying documents, extracting key data such as policy numbers and claim amounts, and passing that information to a business process. This improves response times and reduces costs.

Email Processing: A customer service department uses IDP to classify incoming emails based on content and automatically routes them to the correct team. This reduces response times and improves customer satisfaction by ensuring emails are directed appropriately and efficiently.

Generation 4: GPT/LLM Document Processing

Document processing advanced significantly with the advent of transformer-based AI. Generative pre-trained transformers (GPTs) and other large language models (LLMs) introduced deeper contextual understanding, making it easier to extract insights from unstructured text.

Understanding

GPT/LLMs are particularly valuable in the downstream information-processing stages of document workflows. Once documents are ingested, they can interpret both context and content, streamlining tasks that previously required extensive manual effort, such as analysing complex contracts, identifying key clauses, and generating summaries within seconds. Their ability to understand context across multiple languages and domains makes them versatile tools for industries operating in diverse geographies.

Limitations

Although the contextual insights generated by GPT/LLMs are highly valuable, the way these models reach their conclusions can be problematic. Unlike traditional models, which can trace outputs back to specific rules or weights, GPT/LLMs operate largely as black boxes. This lack of transparency makes it difficult to verify their reasoning or assess potential biases and hallucinations. Such challenges are especially concerning in sensitive domains such as legal or healthcare document processing, where errors can have serious consequences.

Explainability concerns are further compounded by blindness to layout and visual cues, and by the risk of error amplification when reasoning over poorly structured inputs.

Costs

Deploying GPT/LLMs for document processing often requires fine-tuning for specific industries and tasks. This process is expensive and time-consuming, requiring domain expertise and large volumes of labelled training data. In addition, these models demand significant computational resources at inference, making large-scale deployments costly.

Key Characteristics of GPT/LLM Document Processing

- Contextual understanding: Interprets meaning beyond keywords, capturing nuance and intent in documents.

- Multilingual capability: Processes and analyses content across multiple languages with high accuracy.

- Domain adaptability: Applies knowledge flexibly across industries and subject areas without retraining for every use case.

- Summarisation and insight generation: Produces concise overviews, extracts key points, and identifies patterns within large text sets.

- Strength in unstructured data: Excels at handling unstructured and semi-structured documents such as emails, contracts, and reports.

- Explainability challenges: Functions as a black box, making it difficult to trace reasoning or validate outputs.

- High computational demand: Requires substantial resources for training and inference, driving up operational costs.

Example Use Cases for GPT/LLM Document Processing

Mortgage Applications: A financial services company leverages GPT/LLM document processing to extract critical information such as income, assets, and liabilities from lengthy mortgage applications, streamlining a traditionally time-consuming step and accelerating approvals.

Improved Patient Outcomes: A hospital applies GPT/LLM document processing to analyse clinical notes, extract key patient data, and suggest preliminary diagnoses from patient records, improving the use of hospital resources and enabling patients to receive better care faster.

Generation 5: Cognitive Document Processing

Cognitive Document Processing (CDP) is a new technology that extends the capabilities of previous generations of document processing to handle a broader range of documents. It leverages Cognitive AI, which integrates visual AI for perception, predictive AI for forecasting, and agentic AI for reasoning and tool use. This combination allows CDP to extract, normalise, and contextualise information in a zero-shot manner, without model training.

Perception Foundation: Why Visual AI First?

Before reasoning or orchestration, automation must first perceive what is in front of it. Visual AI creates a structured understanding of documents and application screens by capturing text, layout, tables, labels, and context. As a result, downstream components receive clean, well-typed inputs instead of raw pixels or brittle templates. This perception-first layer stabilises automation as formats and policies change, reduces maintenance costs, and transforms previously variable work into repeatable inputs for predictive and agentic steps.
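One way to picture the "clean, well-typed inputs" a perception layer hands downstream is as structured records rather than pixels. The Python sketch below is a hypothetical schema, not an actual product data model: each perceived field carries a typed value, a confidence score, its location on the page, and contextual metadata (the visual label it was paired with, related fields) that later predictive and agentic steps can use.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass(frozen=True)
class BoundingBox:
    page: int
    x0: float
    y0: float
    x1: float
    y1: float


@dataclass
class PerceivedField:
    """One typed element emitted by a perception layer (hypothetical schema)."""
    name: str
    value: str
    value_type: str                   # e.g. "date", "money", "text"
    confidence: float                 # 0.0 to 1.0
    box: BoundingBox
    label_text: Optional[str] = None  # visual label the value was paired with
    related: list[str] = field(default_factory=list)  # names of related fields


@dataclass
class PerceivedDocument:
    doc_type: str
    fields: list[PerceivedField]

    def get(self, name: str) -> Optional[PerceivedField]:
        return next((f for f in self.fields if f.name == name), None)

    def low_confidence(self, threshold: float = 0.8) -> list[PerceivedField]:
        """Fields a downstream step might verify before acting on them."""
        return [f for f in self.fields if f.confidence < threshold]


doc = PerceivedDocument(
    doc_type="invoice",
    fields=[
        PerceivedField("total_due", "1250.00", "money", 0.97,
                       BoundingBox(1, 410, 700, 520, 720), "Total Due", ["currency"]),
        PerceivedField("currency", "EUR", "text", 0.62,
                       BoundingBox(1, 525, 700, 560, 720), None, ["total_due"]),
    ],
)
print(doc.get("total_due").label_text)         # Total Due
print([f.name for f in doc.low_confidence()])  # ['currency']
```

Because every value arrives with its type, position, and context, downstream components can reason over fields by name instead of re-reading the page.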

The perception, contextualisation, and understanding provided by visual technologies complement the reasoning and execution capabilities of agentic AI, delivering stronger results while consuming fewer computational resources. This approach mirrors how the human brain works: visual systems act as a cognitive pre-processor, applying element grouping, pattern recognition, and synthesis to integrate diverse information, context, and perspectives into a unified understanding. This filtered, contextualised information can then be passed to agentic systems for deeper analysis and reasoning.

Zero-Shot Capability

Because of its cognitive preprocessing, CDP can extract high-quality data from documents in a zero-shot manner without model training. This significantly reduces development, deployment, and runtime costs, accelerating time-to-value.

Multi-System Analysis

CDP leverages multiple technologies including machine vision, advanced OCR, deep learning, advanced NLP, semantic analysis, large vision models, and vision-language models. These systems work together to provide comprehensive document analysis and understanding. To mitigate tool deficiencies and reduce the risks of model bias, CDP combines outputs through refinement, extraction, and best-result analysis.
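The text does not specify how best-result analysis works. As one hypothetical strategy, the sketch below fuses candidate values for a single field from several tools by confidence-weighted voting after light normalisation, and abstains (returning `None`) when no value wins a clear share, so the field can be escalated rather than guessed. Tool names, the normalisation rule, and the 0.6 agreement threshold are assumptions.

```python
from collections import defaultdict
from typing import NamedTuple, Optional


class Candidate(NamedTuple):
    tool: str          # hypothetical tool identifier
    value: str
    confidence: float  # tool-reported score, assumed comparable across tools


def normalise(value: str) -> str:
    """Collapse whitespace and case so trivially different outputs agree."""
    return " ".join(value.split()).lower()


def fuse(candidates: list[Candidate], min_share: float = 0.6) -> tuple[Optional[str], float]:
    """Confidence-weighted vote; returns (value, winning share) or (None, share)."""
    weights = defaultdict(float)
    first_seen = {}
    for c in candidates:
        key = normalise(c.value)
        weights[key] += c.confidence
        first_seen.setdefault(key, c.value)
    total = sum(weights.values())
    if total == 0:
        return None, 0.0
    best = max(weights, key=weights.get)
    share = weights[best] / total
    return (first_seen[best], share) if share >= min_share else (None, share)


value, share = fuse([
    Candidate("ocr_engine_a", "INV-1042", 0.90),
    Candidate("vision_language_model", "inv-1042", 0.80),
    Candidate("ocr_engine_b", "1NV-1042", 0.40),
])
print(value, round(share, 2))  # INV-1042 0.81
print(fuse([Candidate("a", "12.50", 0.5), Candidate("b", "125.0", 0.5)]))  # (None, 0.5)
```

Abstaining on a split vote is the point of the design: a single tool's confident error is outvoted, and genuine disagreement surfaces as an exception instead of a silent mistake.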

Contextual Metadata

The capture of context and relationship metadata improves extraction accuracy while also increasing the inherent value of the information. By enriching document content with contextual insights, CDP enhances decision-making and strengthens downstream business processes.

Self-Correcting

The multi-tool approach, combined with improved contextual understanding and recognition of relationships between data elements, creates a foundation for continuous learning. Over time, CDP systems can self-correct and adapt, becoming more flexible, maintainable, and effective at handling document variability and complexity.

Lower Costs

Once data is preprocessed, CDP can apply advanced techniques including machine vision, fuzzy logic, vision-language models, LLMs, and other cognitive methods to extract information. This cognitive systems approach allows CDP to process more complex documents at higher quality and lower cost than IDP or GPT/LLM-only solutions.

Key Features of Cognitive Document Processing

- Multi-tool architecture: Combines complementary tools and models to maximise accuracy and resilience.

- Deep learning OCR: Provides superior text recognition across varied fonts, formats, and quality levels.

- Advanced NLP and machine vision: Enables semantic analysis, entity recognition, and visual context interpretation.

- Large vision and vision-language models: Enhances cross-modal understanding of documents by linking visual and textual elements.

- Context-aware data extraction: Interprets information based on surrounding content and relationships.

- Semantic understanding: Goes beyond raw text capture to understand meaning, structure, and intent.

- Unstructured document handling: Processes variable, complex documents such as contracts, research papers, and legal briefs.

- Zero-shot extraction: No training datasets, labelled samples, or template configuration required.

- Tolerance for variability: Handles diverse document formats, policies, and layouts with ease.

- Faster deployment, lower costs: Reduces development, training, and maintenance overhead.

- Improved data quality: Enhances accuracy, consistency, and reliability of extracted information.

Example Use Cases for CDP

Contract Analysis: A legal department leverages CDP to analyse complex contracts, automatically identifying key clauses, obligations, and risks. The system highlights areas requiring review or negotiation, reducing the workload of legal teams and allowing them to focus on higher-value strategic tasks.

Regulatory Policy Enforcement: An energy company applies CDP to ensure compliance with environmental and safety regulations by automating the extraction and analysis of critical data from inspection reports, permits, environmental impact assessments, safety audits, and emissions logs. The system cross-references extracted data against regulatory standards and flags anomalies or potential non-compliance issues in real time.

Generation 6: Ecosystem Information Management

The future of document processing is likely to evolve into Ecosystem Information Management (EIM). EIM extends the capabilities of CDP and Cognitive Process Automation (CPA) across organisations through shared data and control planes, with blockchain-backed fabrics providing identity and provenance. Unlike earlier generations, which focused on departmental or use-case-specific automation, EIM aims to create a fully interconnected environment that enhances collaboration and enables real-time decision-making across business networks.

Cross-Ecosystem Workflows

EIM will process documents in real time as part of end-to-end automated workflows spanning multiple systems and organisations. These systems move beyond simply extracting data. They treat documents as components of an interconnected information ecosystem rather than isolated data sources. This perspective enables more comprehensive, ecosystem-wide decision-making.

Data Fabric

EIM relies on advanced cognitive and reasoning AI, real-time data sharing, agentic workflows, and interoperability via a shared data fabric, often supported by blockchain infrastructure. This enables organisations to operate in highly coordinated, efficient, and collaborative configurations. Blockchain services add essential identity and reputation mechanisms, giving participants confidence in both the authenticity of documents and the integrity of associated metadata.
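Blockchain fabrics involve distributed consensus, identity, and governance that are well beyond a short example. The sketch below isolates just one underlying idea, tamper evidence through hash chaining, using Python's standard `hashlib` and `json` modules: each entry's SHA-256 digest covers its payload and the previous entry's digest, so altering any historical record invalidates the chain. It is a single-party illustration, not a blockchain, and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64


def _entry_hash(payload: dict, prev_hash: str) -> str:
    """SHA-256 over a canonical JSON encoding of the payload plus the previous hash."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":")) + prev_hash
    return hashlib.sha256(body.encode("utf-8")).hexdigest()


class ProvenanceLog:
    """Append-only, hash-chained record of document events (single-party sketch)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, payload: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        digest = _entry_hash(payload, prev)
        self.entries.append({"payload": payload, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every link; an edited payload or reordered entry breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or _entry_hash(entry["payload"], prev) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = ProvenanceLog()
log.append({"doc_id": "PO-778", "event": "issued", "party": "buyer-co"})
log.append({"doc_id": "PO-778", "event": "fields_extracted", "party": "supplier-co"})
print(log.verify())                                  # True
log.entries[0]["payload"]["party"] = "someone-else"  # tamper with history
print(log.verify())                                  # False
```

In a shared fabric, the same check would be run independently by each participant, which is what gives counterparties confidence in document authenticity and metadata integrity.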

Efficiency

EIM reduces manual input and duplication in workflows such as data entry and document verification. It manages documents and their embedded information as part of a broader, integrated process and can process and analyse documents in real time. By linking multiple businesses through shared systems, EIM eliminates silos and improves overall operational efficiency.

Challenges

While EIM promises radical efficiency gains and innovation at scale, it requires substantial investment in infrastructure, process redesign, and the development of interoperability standards and decentralised governance systems. Technical hurdles include integrating diverse systems, ensuring data compatibility across platforms, protecting data privacy, and coordinating among stakeholders with varying technological capabilities.

Key Features of Ecosystem Information Management

- Holistic integration: Connects workflows and data across ecosystem partners for seamless collaboration.

- Shared data environment: Provides a trusted, unified platform for information exchange.

- Real-time document processing: Embeds automated document handling directly into business systems.

- Real-time analytics and decision support: Delivers instant insights to guide operations and strategy.

- AI-to-AI collaboration: Enables autonomous agents from different organisations to coordinate and share insights.

- Interoperability: Integrates smoothly with multiple backend systems across the ecosystem.

- Ecosystem-wide efficiency: Enhances speed, accuracy, and productivity across interconnected enterprises.

- Security and privacy management: Ensures compliance, governance, and protection of sensitive information.

Example Use Cases for EIM

Procurement Automation: A multinational corporation with complex multiparty supply chains might use EIM to automate an entire procurement process, from receiving purchase orders to negotiating contracts, managing suppliers, and processing payments. This can significantly reduce procurement cycle times and minimise human errors.

Legal Compliance: A financial institution facilitating trade finance might use EIM to automatically analyse and process regulatory documents across multiple jurisdictions, ensuring real-time compliance with changing legal requirements. This helps avoid penalties and improves audit readiness.

Metrics That Matter

To avoid the legacy plateau, in which planning is driven by inflated accuracy on easy cases while exception costs stay hidden, ground your automation programme in a concise, outcome-focused set of metrics.

Useful Accuracy (across all documents): Correct field and decision outputs divided by total outputs, including exceptions and edge cases. Counters accuracy claims that count only straight-through-processed cases, and reflects true business quality.

Straight-Through Processing Rate: Cases completed with zero human intervention divided by total cases. Measures actual autonomy and end-user impact in terms of speed and effort.

Time-to-Adapt: Elapsed time from a breaking change in UI, policy, or vendor to restored SLA and accuracy. Captures agility and limits the cost of downtime.

Maintenance Load: The number of rules, templates, and models edited per month, together with operational hours spent on upkeep as a share of total operational hours. A direct proxy for the ongoing cost of ownership.

Resilience Index: Post-change throughput in the first 7 to 14 days divided by baseline throughput. Demonstrates how well automations endure real-world drift.

Trace and Lineage Completeness: Percentage of cases with a full perception, prediction, reasoning, and action record including inputs, versions, tool calls, and decisions. Enables auditability, regulatory compliance, and faster root-cause analysis.
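These metric definitions translate directly into code. The sketch below assumes a hypothetical per-case log (`CaseRecord` and its fields are illustrative, not a prescribed schema) and reports Maintenance Load as two separate numbers, an edit count and the upkeep share of operational hours, as an interpretive choice rather than a single blended figure.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class CaseRecord:
    """Hypothetical per-case log entry; field names are illustrative."""
    correct_outputs: int   # fields and decisions judged correct
    total_outputs: int     # all fields and decisions, exceptions included
    human_touches: int     # human interventions on this case
    full_trace: bool       # perception, prediction, reasoning, and action all recorded


def useful_accuracy(cases: list[CaseRecord]) -> float:
    total = sum(c.total_outputs for c in cases)
    return sum(c.correct_outputs for c in cases) / total if total else 0.0


def stp_rate(cases: list[CaseRecord]) -> float:
    return sum(c.human_touches == 0 for c in cases) / len(cases) if cases else 0.0


def lineage_completeness(cases: list[CaseRecord]) -> float:
    return sum(c.full_trace for c in cases) / len(cases) if cases else 0.0


def time_to_adapt(breaking_change: datetime, sla_restored: datetime) -> timedelta:
    return sla_restored - breaking_change


def resilience_index(post_change_throughput: float, baseline_throughput: float) -> float:
    return post_change_throughput / baseline_throughput


def maintenance_load(artefacts_edited: int, upkeep_hours: float,
                     total_hours: float) -> tuple[int, float]:
    """Report the monthly edit count and upkeep share of operational hours side by side."""
    return artefacts_edited, upkeep_hours / total_hours


cases = [
    CaseRecord(10, 10, 0, True),
    CaseRecord(8, 10, 1, True),
    CaseRecord(9, 10, 0, False),
    CaseRecord(6, 10, 2, True),
]
print(useful_accuracy(cases))       # 0.825
print(stp_rate(cases))              # 0.5
print(lineage_completeness(cases))  # 0.75
print(time_to_adapt(datetime(2024, 5, 1, 9), datetime(2024, 5, 3, 15)))  # 2 days, 6:00:00
print(resilience_index(850, 1000))  # 0.85
print(maintenance_load(14, 120, 1600))  # (14, 0.075)
```

Note that Useful Accuracy keeps human-touched cases in its denominator, so it cannot be inflated by reporting only the straight-through subset.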

Conclusion

The evolution of document processing technologies, from OCR and RPA to IDP, GPT/LLM processing, CDP, and eventually EIM, has been driven by the pursuit of greater efficiency, accuracy, and scalability in managing ever-growing volumes of business-critical information. OCR and RPA introduced the first wave of automation for simple, rule-based tasks. IDP added machine learning to handle semi-structured data, while GPT/LLMs brought contextual language understanding. CDP's perception-first approach advances the field with adaptability, continuous learning, and self-correction without extensive training. EIM is the logical network-level extension of CDP: a fully interconnected and collaborative environment built on next-generation digital infrastructure, where all prior innovations converge.

As organisations adopt more advanced document processing technologies, they gain the ability to manage increasingly complex and unstructured documents with less human intervention. This shift amplifies the value of document-derived insights and enables faster, more reliable business processes.

Businesses implementing solutions such as CDP can unlock significant operational efficiencies and competitive advantages, including reduced processing costs, accelerated decision-making, and improved agility. However, realising these benefits requires more than just technology. Success depends on thoughtful planning, investment, employee training, process redesign, and effective change management.

Ultimately, the question for most organisations is not whether to automate document processing, but how far and how quickly they must advance to remain competitive in an AI-driven future.

About Syncura

Syncura builds cognitive automation for the documents and processes that defeat conventional automation. Our Cognitive Document Processor and Cognitive Process Automation platform are designed for the high-variability, exception-heavy workflows at the core of financial services, insurance, healthcare, and supply chain operations.

Where conventional automation manages variability through exception queues, retraining cycles, and maintenance overhead, Syncura resolves it within a governed processing architecture. The result is automation that is deterministic, auditable, and durable as environments change.

For more information, visit syncura.ai or contact us at info@syncura.ai.